Academic projects showcase

Projects developed by undergraduate students using neuromorphic sensing, algorithms, and processing hardware.

Spiking Gesture Recognition on Event-based Camera Data

This project implements a Spiking Neural Network (SNN) for hand gesture recognition using the IBM DVS Gesture Dataset and live data from a Prophesee Neuromorphic Event-based Camera. The approach employs a convolutional SNN architecture with heterogeneous Leaky Integrate-and-Fire (LIF) neurons.
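As a rough illustration of what heterogeneous LIF neurons do, the sketch below simulates a small layer in plain Python in which every neuron has its own leak factor. It is not the project's convolutional architecture: the function names, the hard reset, the threshold of 1.0, and the example leak values are assumptions chosen for illustration.

```python
def lif_step(v, x, beta, threshold=1.0):
    """Advance a layer of LIF neurons by one time step.

    v:    membrane potentials from the previous step
    x:    input currents for this step
    beta: per-neuron leak factors in (0, 1); differing values make the
          layer heterogeneous (assumed form of heterogeneity)
    Returns (new_potentials, spikes).
    """
    new_v, spikes = [], []
    for v_i, x_i, b_i in zip(v, x, beta):
        v_i = b_i * v_i + x_i                 # leaky integration
        fired = v_i >= threshold
        spikes.append(1 if fired else 0)
        new_v.append(0.0 if fired else v_i)   # hard reset after a spike
    return new_v, spikes


def run_lif(inputs, beta, threshold=1.0):
    """Run the layer over a sequence of input vectors, one per time step."""
    v = [0.0] * len(beta)
    trains = []
    for x in inputs:
        v, s = lif_step(v, x, beta, threshold)
        trains.append(s)
    return trains


# Two neurons receive the same constant input but leak at different rates.
betas = [0.5, 0.9]
spike_trains = run_lif([[0.3, 0.3]] * 10, betas)
spike_counts = [sum(step[i] for step in spike_trains) for i in range(len(betas))]
```

With identical input, the fast-leaking neuron never reaches threshold while the slow-leaking one integrates long enough to fire, which is the kind of timescale diversity a heterogeneous layer can exploit.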

Team:

- Maximilian Werzinger - werzingerma89360@th-nuernberg.de

Event-based Neuromorphic Vision for Saxophone Player Posture Analysis

NeuroPose is a real-time, computer-vision-based posture correction system designed for musicians, specifically saxophone players. It uses MediaPipe for pose estimation and supports event cameras (FireNet/Prophesee) alongside standard webcams to provide immediate visual and haptic feedback.

Team:

- Alexander Lorenz - lorenzal100383@th-nuernberg.de

- Tobias Mack - mackto106280@th-nuernberg.de

Spiking DQN Agents to learn control states

This project mimics the brain's ability to infer motion without explicit speed data. A spiking neural network (SNN) agent is deprived of velocity inputs and trained to reconstruct the missing environmental physics internally, using its membrane potentials as short-term memory. Trained with Backpropagation Through Time, the SNN-based Deep Q-Network learned to encode velocity in its hidden layers, effectively developing internal "speedometers" from spike timings alone.

Team:

- Robin Feldmann - feldmannro80685@th-nuernberg.de

- Maximilian Arnold - arnoldma80620@th-nuernberg.de

YOLO Deep Neural Conv Net on Event-based Camera Traffic Tracking

The Neuromorphic Traffic Training project compares approaches for obtaining event-based data, including self-recording, sensor-based capture, and conversion/simulation methods, by training Artificial Neural Networks (ANNs) and converting them into Spiking Neural Networks (SNNs). The focus is on developing models that can effectively track diverse traffic actors, such as pedestrians, cars, and motorcycles.

Team:

- Mark Franz - franzma84803@th-nuernberg.de

- Laurin Kerntke - kerntkela84836@th-nuernberg.de

- Jonathan Pohl - pohljo85440@th-nuernberg.de

Hybrid ArtificialNN and SpikingNN for closed-loop motor control

This project uses LuI silicon neurons in a spiking neural network that converts the sound-processing output of an ANN into spike trains to move a servo motor. A second ANN classifies the spike trains to determine the motor direction.

Team:

- Selin Schmitt - schmittse79503@th-nuernberg.de

- Timon Löwl - loewlti80780@th-nuernberg.de

- Sven Remy - remysv80967@th-nuernberg.de

Spiking Silicon Neurons for Text2Morse Conversion

Neuromorphic data encoding and decoding with LuI silicon neurons, used to implement an efficient Text2Morse converter based on spike trains.
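To make the idea of a spike-train Morse code concrete, here is a minimal software sketch of encoding and decoding. It does not model the LuI silicon neurons. The binning scheme is an assumption borrowed from standard Morse timing (dot = 1 active bin, dash = 3 active bins, gaps of 1, 3, and 7 silent bins), and all function names are hypothetical.

```python
from itertools import groupby

MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".", "F": "..-.",
    "G": "--.", "H": "....", "I": "..", "J": ".---", "K": "-.-", "L": ".-..",
    "M": "--", "N": "-.", "O": "---", "P": ".--.", "Q": "--.-", "R": ".-.",
    "S": "...", "T": "-", "U": "..-", "V": "...-", "W": ".--", "X": "-..-",
    "Y": "-.--", "Z": "--..",
}


def text_to_morse(text):
    """Letters are separated by spaces, words by ' / '; unknown characters are skipped."""
    words = text.upper().split()
    return " / ".join(" ".join(MORSE[c] for c in w if c in MORSE) for w in words)


def morse_to_spikes(morse):
    """Encode Morse as a binary train: 1 = spike in that time bin, 0 = silence."""
    bins = []
    for w_i, word in enumerate(morse.split(" / ")):
        if w_i:
            bins += [0] * 7                      # gap between words
        for l_i, letter in enumerate(word.split(" ")):
            if l_i:
                bins += [0] * 3                  # gap between letters
            for s_i, symbol in enumerate(letter):
                if s_i:
                    bins += [0]                  # gap between symbols
                bins += [1] if symbol == "." else [1, 1, 1]
    return bins


def spikes_to_text(bins):
    """Decode by run length: short/long spike bursts are dots/dashes, long silences are gaps."""
    inverse = {code: ch for ch, code in MORSE.items()}
    text, letter = [], ""
    for value, run in groupby(bins):
        n = len(list(run))
        if value == 1:
            letter += "." if n == 1 else "-"
        elif n >= 3:                             # a letter (or word) just ended
            if letter:
                text.append(inverse[letter])
                letter = ""
            if n >= 7:
                text.append(" ")
    if letter:
        text.append(inverse[letter])
    return "".join(text)


train = morse_to_spikes(text_to_morse("HI SOS"))
decoded = spikes_to_text(train)
```

The round trip through the binary train recovers the original text, so the information is carried entirely by burst and gap durations.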

Team:

- Tan Phat, Nguyen - phatnguyen@gmx.de

- Paul Schmachtl - paul.schmachtl@outlook.com

Event-based camera spatial and temporal clustering for predictive maintenance

This project uses a neuromorphic event-based camera to design and develop a spatial and temporal clustering algorithm for frequency detection, applied to machine state estimation and predictive maintenance.
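One simple way to picture frequency detection on event data is sketched below. It is not the project's clustering algorithm: a fixed pixel grid stands in for spatial clustering, and the frequency of each grid cell is estimated from the mean interval between OFF-to-ON polarity transitions. The event tuple layout `(t, x, y, polarity)`, the `cell_size` parameter, and the function name are assumptions.

```python
from collections import defaultdict


def estimate_frequencies(events, cell_size=8):
    """Estimate an oscillation frequency per grid cell.

    events: iterable of (t_seconds, x, y, polarity), sorted by time,
            with polarity +1 (ON) or -1 (OFF).
    Returns {(cell_x, cell_y): frequency_hz} for cells that show at
    least two OFF-to-ON transitions.
    """
    last_polarity = {}
    rising_times = defaultdict(list)
    for t, x, y, p in events:
        cell = (x // cell_size, y // cell_size)      # coarse spatial grouping
        if p > 0 and last_polarity.get(cell, 1) < 0:
            rising_times[cell].append(t)             # start of a new cycle
        last_polarity[cell] = p

    freqs = {}
    for cell, times in rising_times.items():
        if len(times) >= 2:
            period = (times[-1] - times[0]) / (len(times) - 1)
            if period > 0:
                freqs[cell] = 1.0 / period
    return freqs


# Synthetic events: one pixel blinking at 50 Hz (ON at k*20 ms, OFF 10 ms later).
synthetic = []
for k in range(10):
    synthetic.append((k * 0.02, 3, 4, +1))
    synthetic.append((k * 0.02 + 0.01, 3, 4, -1))
synthetic.sort()
frequencies = estimate_frequencies(synthetic)
```

A change in the estimated frequency of a region over time could then serve as an indicator of a change in machine state, which is the use case the project describes.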

Team:

- Benedikt Fischer - fischerbe98484@th-nuernberg.de

- Felix Sixdorf - sixdorffe80095@th-nuernberg.de

- David Stiegler - stieglerda78912@th-nuernberg.de

Spiking PID Controller for Mobile Robot Trajectory Tracking

A neuromorphic PID controller implemented in Nengo for trajectory tracking of a differential-drive mobile robot under uncertainty.
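For reference, the control law that such a controller approximates can be sketched as a conventional discrete-time PID. This is a plain, non-spiking version and does not use Nengo; a spiking implementation would represent the error, integral, and derivative terms with neuron populations instead. The class name, gains, and the `wheel_speeds` helper (standard differential-drive kinematics) are illustrative assumptions.

```python
class PID:
    """Textbook discrete-time PID controller (non-spiking reference)."""

    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def update(self, error, dt):
        """Return the control output for the current tracking error."""
        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0          # no history on the first call
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


def wheel_speeds(v, omega, wheel_base):
    """Convert linear speed v and turn rate omega into (left, right) wheel speeds."""
    half = omega * wheel_base / 2.0
    return v - half, v + half


# Steer toward the reference heading: the heading error drives the turn rate.
heading_pid = PID(kp=2.0, ki=0.5, kd=0.1)
omega_1 = heading_pid.update(error=1.0, dt=0.1)
omega_2 = heading_pid.update(error=0.5, dt=0.1)
left, right = wheel_speeds(v=1.0, omega=2.0, wheel_base=0.5)
```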

Team:

- Adrian Stangl - stanglad98626@th-nuernberg.de

- Bastian Wunderlich - wunderlichba98628@th-nuernberg.de

Follow the leader robot swarm

A neuromorphic vision-based frequency tracking algorithm with closed-loop control and embedded processing for collaborative mini-robots.

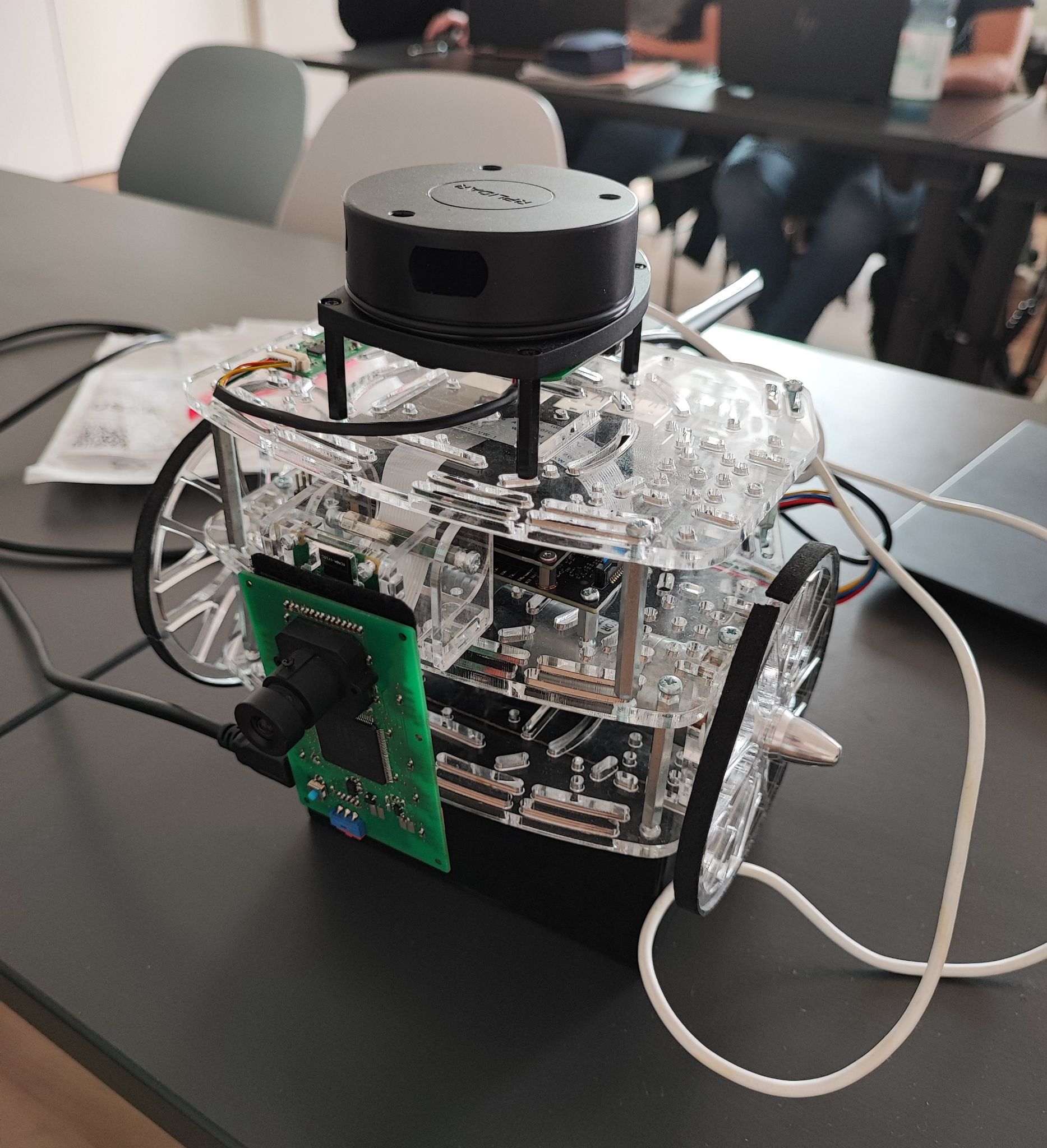

Scene understanding for robot motion

Fusion of neuromorphic visual sensing with LiDAR for scene understanding and planning in mobile robot motion control.

Camera-based instruments for karaoke

Users can accompany their favourite songs instrumentally by imitating selected instruments with gestures sensed using a neuromorphic vision sensor.

Team:

- Selin Schmitt - schmittse79503@th-nuernberg.de

- Kay Hartmann - hartmannka80488@th-nuernberg.de

Crowd-sourced visuals

Privacy-preserving generation of club visuals based on synchronized neuromorphic video sensing and sound generation.

Team:

- Selin Schmitt - schmittse79503@th-nuernberg.de

- Kay Hartmann - hartmannka80488@th-nuernberg.de

Event-based camera-LiDAR fusion for clustering-based depth estimation

This project addresses the challenge of estimating depth using a single DVS (Dynamic Vision Sensor) camera and a 2D LiDAR. Built using ROS2 for communication and data processing, the system can run on various platforms, including wheeled robots with Jetson Nano or similar hardware. A custom clustering algorithm was developed to estimate the depth of objects outside the LiDAR's measurement plane, enhancing depth perception while maintaining affordability.

Team:

- Annika Igl - aiglgg@web.de

- Timo Kapellner - timo.kapellner@gmail.com